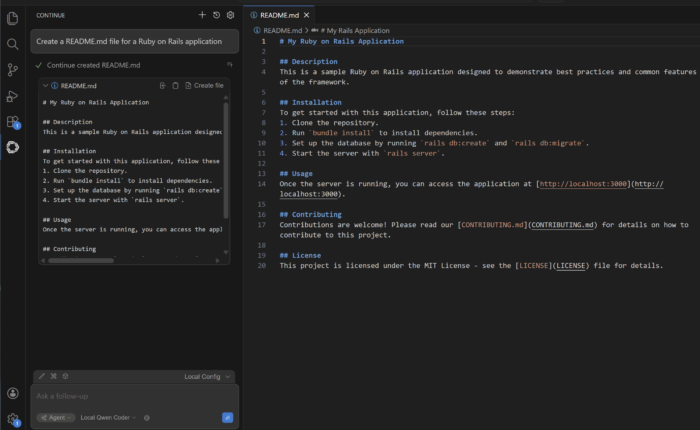

In Running LLMs in Intel Laptops, we installed and ran an LLM on a laptop and used it as a personal chatbot. Let’s push this further by connecting our IDE (Integrated Development Environment) to the model so that the LLM can help us write code.

In the previous article we installed the Qwen 2.5 7B Instruct model. For our IDE use case, however, we will use another variant of the Qwen model called Coder. It is important to understand that a model can have several variants, each better suited to a specific task. For instance: